How to Bypass Codex Usage Limits in 2026

Why OpenAI Codex throttles you, and the API setup that doesn't


OpenAI Codex CLI is one of the most useful coding agents shipped this year — and one of the most aggressively rate-limited. If you actually use it for real work, you blow through the weekly cap by Wednesday and spend the rest of the week watching codex print "You've hit your usage limit" into your terminal.

This guide walks through what the limit actually is, why the obvious workarounds don't work, and the configuration most professional Codex users have switched to.

What the Codex usage limit actually looks like in 2026

Codex CLI is gated by your ChatGPT subscription tier:

| Plan | GPT-5-Codex weekly budget | Reset |
|---|---|---|
| Plus ($20/mo) | ~50–80 hrs of typical agent use | Weekly, rolling |
| Pro ($200/mo) | ~5× Plus | Weekly, rolling |
| Business / Edu | Per-seat, pooled | Weekly |

The exact numbers shift, but the structure doesn't: a single tool-heavy refactor session (the kind where you ask Codex to rewrite a module, run tests, fix the failures, and try again) can burn 4–6 hours of weekly budget in one sitting. At that rate, the ~50–80 hours on Plus cover roughly 8–20 such sessions, so a few long sessions a day can exhaust the week's budget within days.

What does *not* work

"Unlimited Codex" reseller accounts on Telegram / Discord

Shared ChatGPT accounts. Banned within days, no refunds, sometimes outright phishing.

Multiple OpenAI accounts on one machine

Codex CLI binds the auth token to your local config. You can technically log out and back in, but OpenAI's billing side detects rapid account hopping and quietly throttles all of them.

"Jailbreak prompts" for Codex

Codex doesn't refuse on content; it refuses on quota. There is no prompt you can write that resets your weekly cap.

Switching to GPT-5 in ChatGPT instead

Different cap, same problem — and you lose Codex's tool-use loop, sandboxing, and IDE integration.

What actually works: point Codex at an OpenAI-compatible API

Codex CLI reads its provider endpoint from `~/.codex/config.toml` and the API key from the environment variable named there. If you point it at an OpenAI-compatible API that isn't gated by your ChatGPT subscription, you're billed per token instead of per week, and you can keep coding when your "official" cap is empty.

Hypereal exposes the same model family (gpt-5, gpt-5-codex, gpt-5-mini) at a per-token rate that works out cheaper than ChatGPT Pro for most coders, and there's no weekly cap.

Setup (60 seconds)

- Sign up at hypereal.cloud and grab an API key.
- Edit `~/.codex/config.toml`:

      [model_providers.hypereal]
      name = "Hypereal"
      base_url = "https://api.hypereal.cloud/v1"
      env_key = "HYPEREAL_API_KEY"
      wire_api = "responses"

      [profiles.hypereal]
      model = "gpt-5-codex"
      model_provider = "hypereal"

- Export your key:

      export HYPEREAL_API_KEY=hyp_...

- Run Codex with the new profile:

      codex --profile hypereal

You're now off the ChatGPT weekly budget entirely.

Cost comparison (real numbers)

A heavy week of Codex use — roughly what blows through the Plus weekly cap — works out to around 80–120M input tokens and 6–12M output tokens.

| Setup | Cost |
|---|---|
| ChatGPT Plus (when not capped) | $20/month (then throttled) |
| ChatGPT Pro | $200/month fixed |
| Hypereal pay-per-token, same usage | ~$45–80 per heavy week |

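If you'd been about to upgrade from Plus to Pro just for the cap, compare on the same time basis: the subscription prices are monthly, while the pay-per-token estimate is per week. One or two heavy weeks a month at ~$45–80 each comes to roughly $45–160, well under Pro's $200. Heavy use every week comes to roughly $195–350 a month, which is on par with Pro or above it.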
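To put the figures on a common monthly basis, the arithmetic can be sketched as follows. The dollar amounts are the article's own estimates; the function names and the choice of 52/12 weeks per month are illustrative assumptions, and lighter weeks are treated as costing nothing.

```python
PRO_MONTHLY_USD = 200.0        # ChatGPT Pro price from the plan table
API_WEEKLY_USD = (45.0, 80.0)  # article's low/high estimate for one heavy week
WEEKS_PER_MONTH = 52 / 12      # about 4.33


def monthly_cost(weekly_usd: float, heavy_weeks: float = WEEKS_PER_MONTH) -> float:
    """Pay-per-token cost for a month containing `heavy_weeks` heavy weeks."""
    return weekly_usd * heavy_weeks


def saving_vs_pro(weekly_usd: float, heavy_weeks: float) -> float:
    """Fraction saved relative to Pro; negative means the API route costs more."""
    return 1 - monthly_cost(weekly_usd, heavy_weeks) / PRO_MONTHLY_USD


# Compare one heavy week, two heavy weeks, and heavy use every week.
for weeks in (1, 2, WEEKS_PER_MONTH):
    low, high = (monthly_cost(w, weeks) for w in API_WEEKLY_USD)
    print(f"{weeks:.2f} heavy weeks/month: ${low:.0f}-${high:.0f} "
          f"vs ${PRO_MONTHLY_USD:.0f} for Pro")
```

The break-even point depends on how many weeks are actually heavy, so it is worth plugging in your own token usage rather than relying on the ranges above.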

What stays the same

- Same `codex` CLI binary.
- Same MCP servers, sandbox config, project-level config.
- Same `gpt-5-codex` model family.
- Same code search / tool-use loop.

The only thing that changes is which provider Codex calls under the hood.

What's different

- No weekly cap.

- Pay per token consumed (roughly $0.50–$1.50 for a typical refactor session).

- One key works across `gpt-5-codex`, `gpt-5`, `claude-opus-4-7`, `claude-sonnet-4-6`, which is handy if you switch the model behind Codex day-to-day.

FAQ

Is this against OpenAI's terms?

No. You're not using your ChatGPT account at all in this setup; you're calling a separate provider's API from Codex CLI. Codex's source supports custom providers explicitly.

Will Codex still get tool-use right?

Yes. The `wire_api = "responses"` setting routes through the same Responses-API shape Codex expects, so tool calls, sandbox commands, and patch applies all behave identically.
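One way to see what "Responses-API shape" means is to build the kind of request involved by hand. The sketch below constructs, but does not send, a minimal `POST {base_url}/responses` call with the `model` and `input` fields used by OpenAI's Responses API. That the provider accepts this request unchanged is the article's claim, not something verified here; `build_responses_request` is a hypothetical helper and the key is a placeholder.

```python
import json
import urllib.request


def build_responses_request(base_url: str, api_key: str,
                            model: str, prompt: str) -> urllib.request.Request:
    """Hypothetical helper: build (without sending) a Responses-style POST."""
    body = json.dumps({"model": model, "input": prompt}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/responses",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key; the base URL and model name come from the config snippet.
req = build_responses_request(
    "https://api.hypereal.cloud/v1", "hyp_example", "gpt-5-codex", "Say hello"
)
```

Passing `req` to `urllib.request.urlopen` with a real key would send it; a JSON reply rather than an HTTP error is a quick sign the endpoint speaks the protocol before you point Codex at it.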

Are GPT-5-Codex outputs the same?

The model weights are the same. Differences in routing layer / context-window handling are negligible for coding work.

Free trial?

Yes. New Hypereal accounts get trial credits, enough to run several real Codex sessions before paying.

Get started

If your weekly Codex cap has become the bottleneck on your engineering output, swapping providers is a 60-second fix. Sign up at hypereal.cloud, drop the snippet above into your config.toml, and finish the refactor you started Monday.

Related Articles

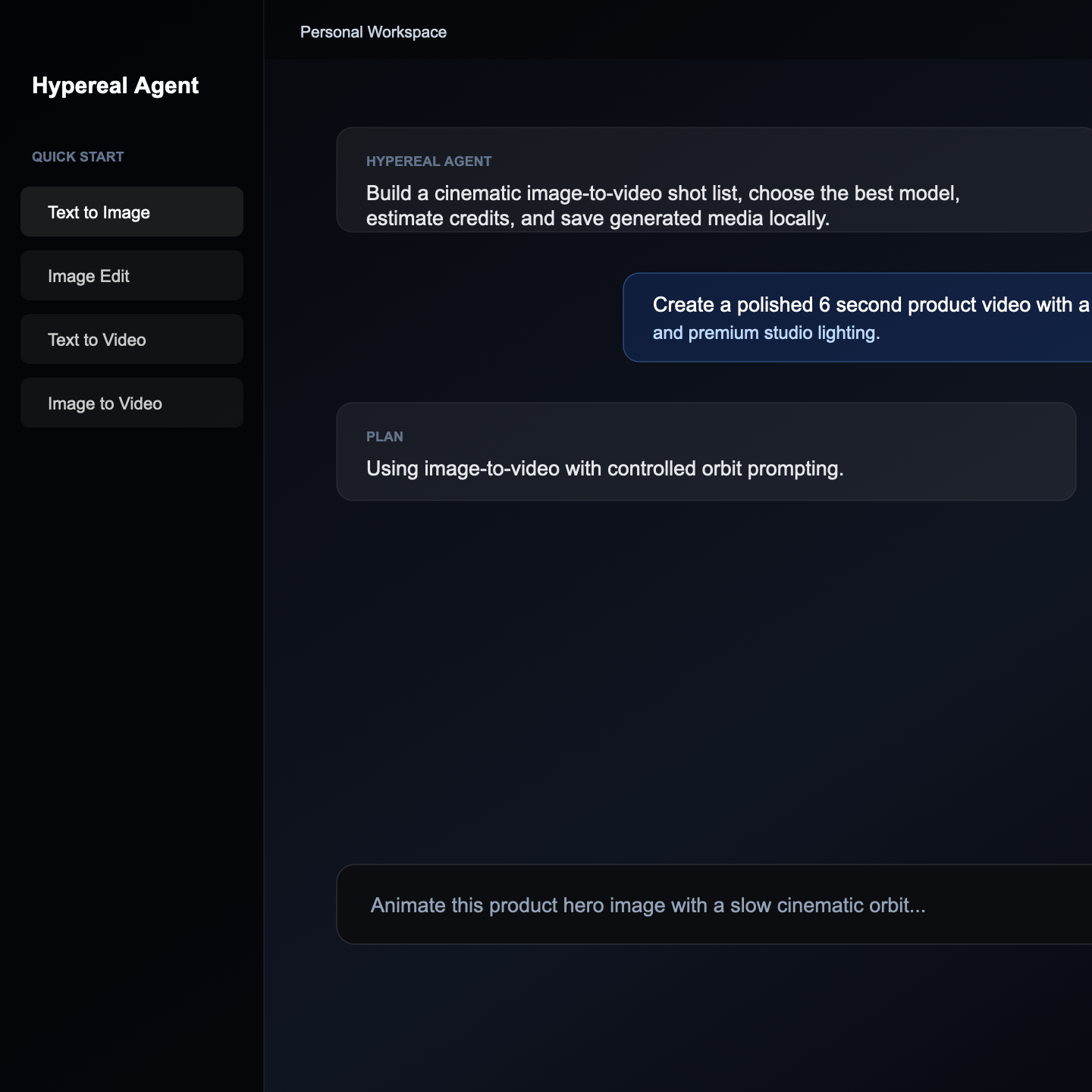

Download Hypereal Agent

Run a local AI media workspace for image generation, video prompts, model selection, credit tracking, and saved artifacts.